This website is dedicated to helping hardware and software developers take full advantage of existing open-source tools and source code. It collects valuable resources and presents them on a single website, linking to external sites where appropriate.

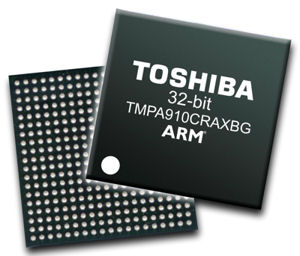

We have created an OpenEmbedded Core repository. The TopasA900 is currently the only supported device, but you can already build the kernel and images for it. We plan to support additional devices in the future.

The Getting Started documentation has been updated. It now includes a section on installing U-Boot using OpenOCD.

A new software release consisting of U-Boot, Linux, and file systems is available for download. Read the complete announcement here.