These web pages are intended to help hardware and software developers get the greatest possible benefit from the immense wealth of experience in free software. To that end, information is collected locally and, where it makes sense, external Internet sources are referenced.

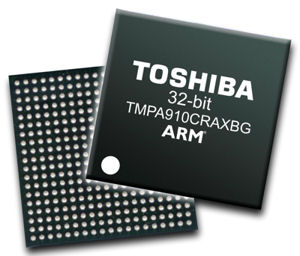

A repository for OpenEmbedded Core for TX09 devices is being set up on the Git server. Currently only the TopasA900 is supported, for which kernels and file systems can already be built. The medium-term goal is to support additional boards.

The Getting Started documentation has been updated. It now includes a new chapter on installing U-Boot using OpenOCD.

A new software release consisting of U-Boot, Linux, and file systems is available for download. The full announcement can be read here.